What Are the Best VMware Alternatives for Virtualization & VDI?

VMware alternatives help organizations reduce virtualization costs, simplify infrastructure management, and support cloud or hybrid deployments. Popular options include Apporto, Proxmox VE, Microsoft Hyper-V, Nutanix, and Azure Stack HCI, each offering different strengths in virtual desktops, virtualization, scalability, and operational efficiency.

VMware has long been a leader in virtualization, but the Broadcom acquisition has prompted many organizations to reconsider their infrastructure strategy. Rising licensing costs, subscription-based pricing, and hidden costs have increased operational expenses for businesses that rely on VMware environments.

As a result, more than half of VMware customers are now evaluating VMware alternatives. The goal is simple: replace VMware with a platform that offers better value without sacrificing reliability. This guide examines the best VMware alternatives based on cost, scalability, operational simplicity, and migration flexibility.

How Did We Select the Best VMware Alternatives?

No two organizations approach virtualization the same way. A midsize business looking to reduce licensing costs has very different priorities than an enterprise managing thousands of virtual machines across multiple locations. That’s why this list focuses on practical evaluation criteria rather than marketing claims.

Each platform was assessed based on its virtualization capabilities, ease of migration from existing VMware environments, pricing transparency, disaster recovery options, high availability features, and support for cloud or hybrid deployments. Long-term scalability was also a major consideration, especially for organizations planning future growth or supporting critical workloads.

The goal wasn’t simply to identify a VMware replacement. It was to find solutions that can reduce complexity, improve operational efficiency, and provide a sustainable foundation for the years ahead.

Licensing Model: Preference was given to platforms with predictable pricing structures and lower operational expenses.

Migration Simplicity: Solutions offering automated tools, migration assistance, or proven migration strategies received higher rankings.

Operational Efficiency: Platforms that reduce operational overhead and simplify infrastructure management were prioritized.

Scalability: The ability to support future growth, evolving business requirements, and critical workloads was a key consideration.

Quick Comparison Table: Which VMware Alternative Fits Your Environment Best?

Before diving into the individual reviews, it’s worth taking a high-level look at how these platforms compare. Some alternatives focus on reducing licensing costs through open-source technologies, while others prioritize hybrid cloud capabilities, integrated infrastructure, or simplified management. The right choice depends on your existing environment, technical resources, and long-term infrastructure goals.

The table below provides a quick snapshot of each platform’s primary strengths, deployment model, pricing approach, and differentiating feature.

| Platform | Best For | Deployment Model | Pricing Model | Standout Feature |
|---|---|---|---|---|
| Apporto | Browser-based VDI & DaaS | Cloud / Hybrid | Custom | Zero-install virtual desktops |
| Proxmox VE | Open-source virtualization | On-Prem | Free + Support | KVM + LXC integration |
| Microsoft Hyper-V | Windows environments | On-Prem / Hybrid | Included with Windows Server | Native Microsoft integration |
| Azure Stack HCI | Hybrid cloud infrastructure | Hybrid | Subscription | Azure integration |
| Nutanix | Hyperconverged infrastructure | Hybrid | Custom | Nutanix AHV |
| Red Hat OpenShift | VM + Containers | Hybrid / Cloud | Subscription | OpenShift Virtualization |
| XCP-ng | Open-source hypervisor | On-Prem | Free + Support | Xen-based virtualization |
| Citrix Hypervisor | Virtual desktop deployments | On-Prem | Custom | Citrix ecosystem |
| Oracle VM / OLVM | Oracle workloads | On-Prem / Hybrid | Custom | Oracle optimization |

Best VMware Alternatives

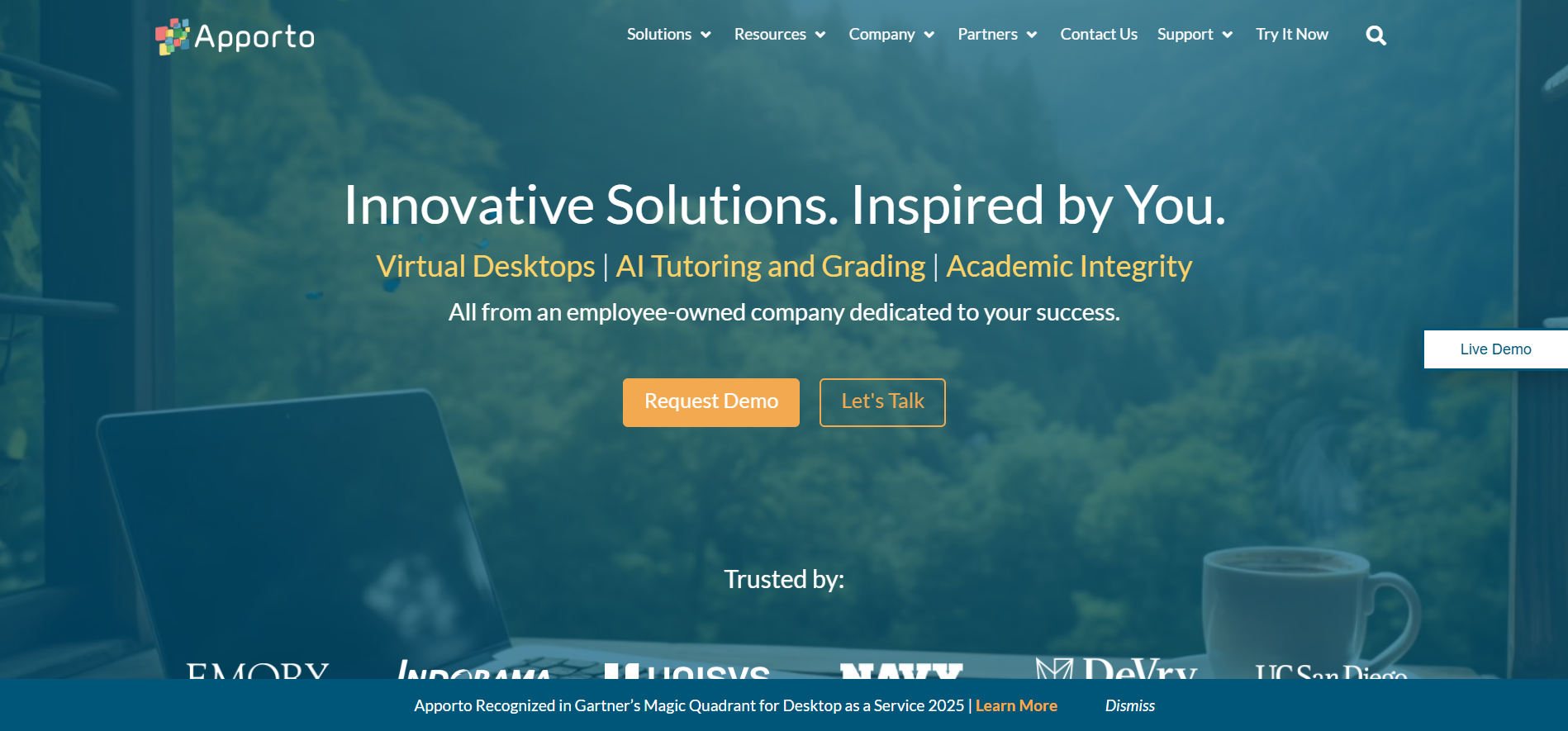

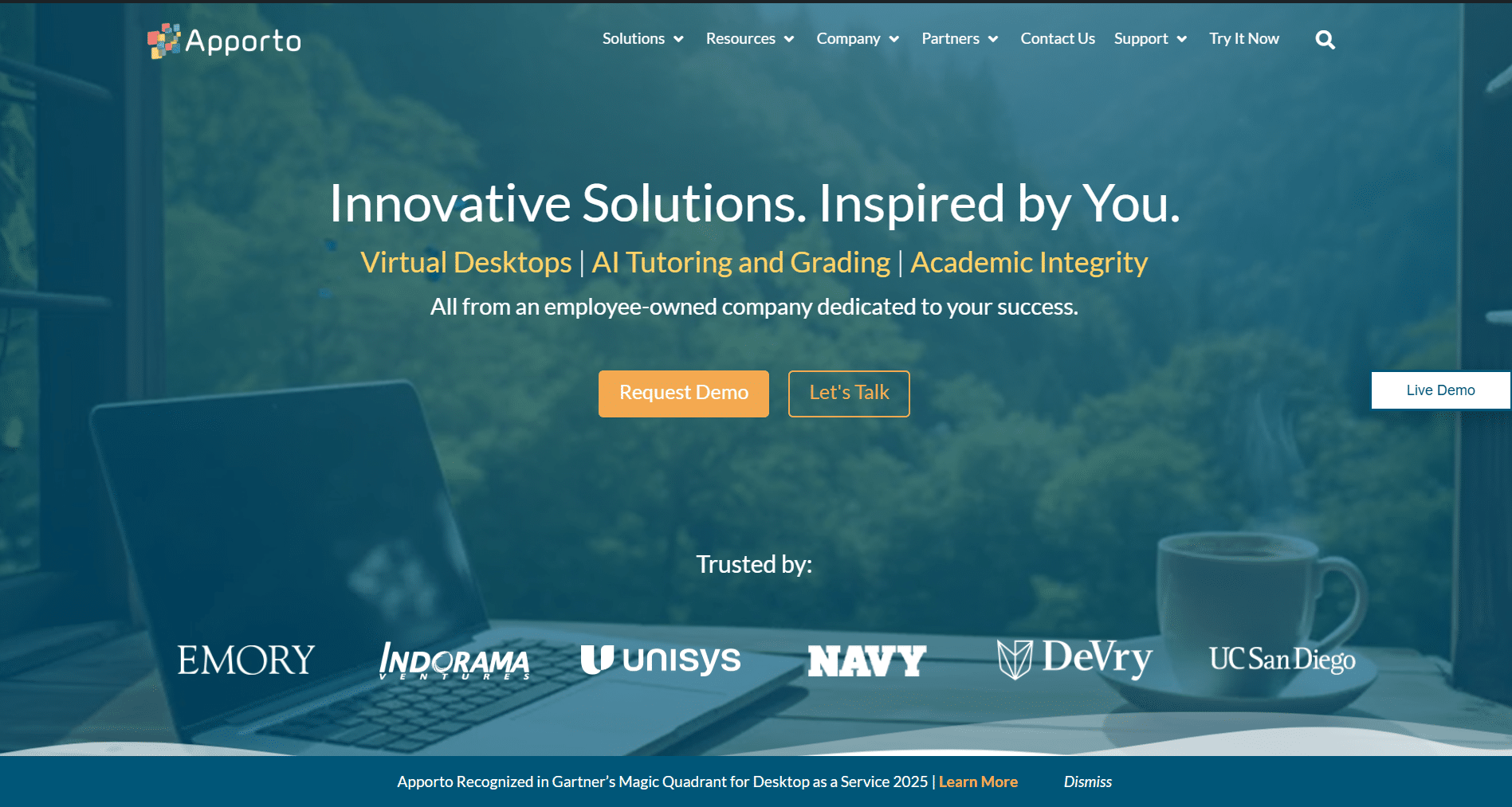

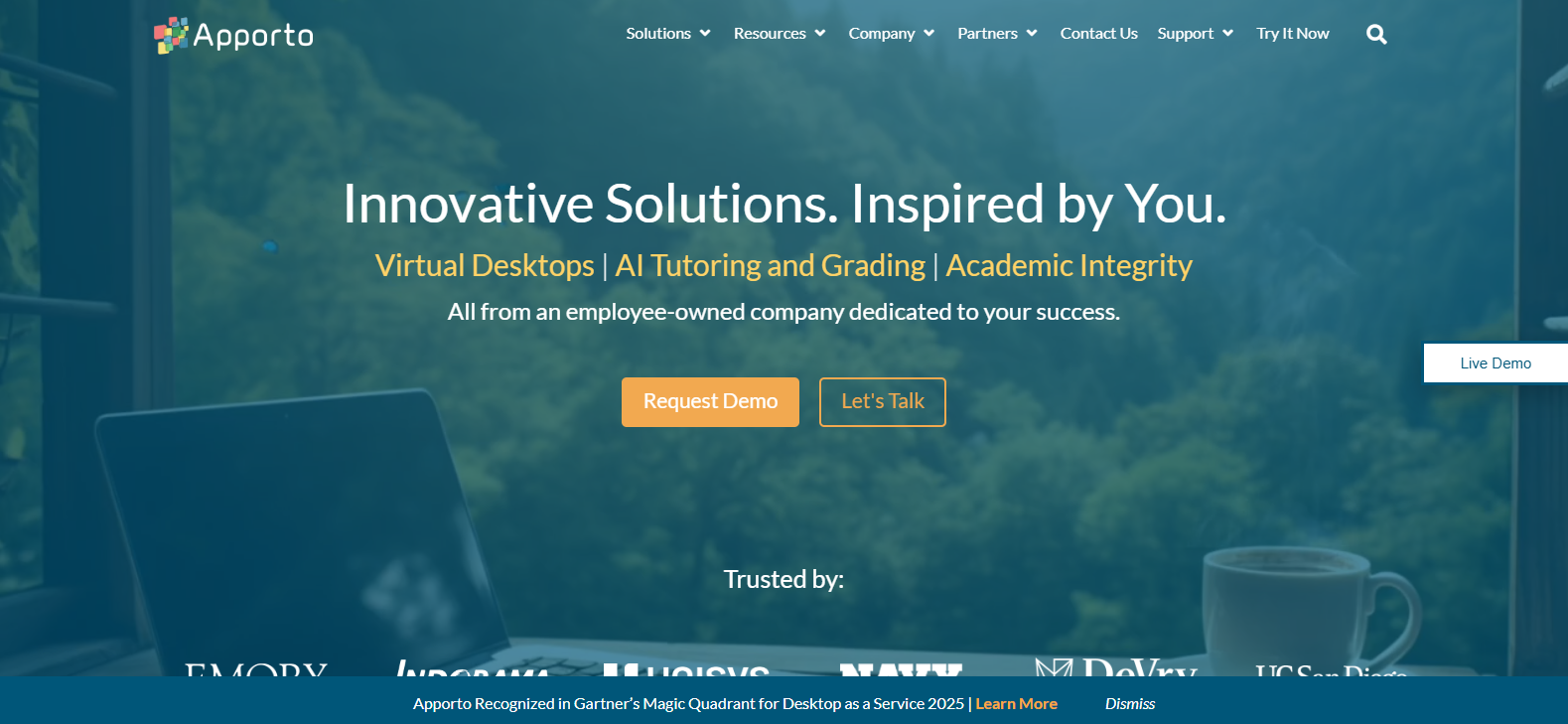

1. Apporto – Best Browser-Based VMware Alternative for Simplified Virtual Desktop Delivery

Overview

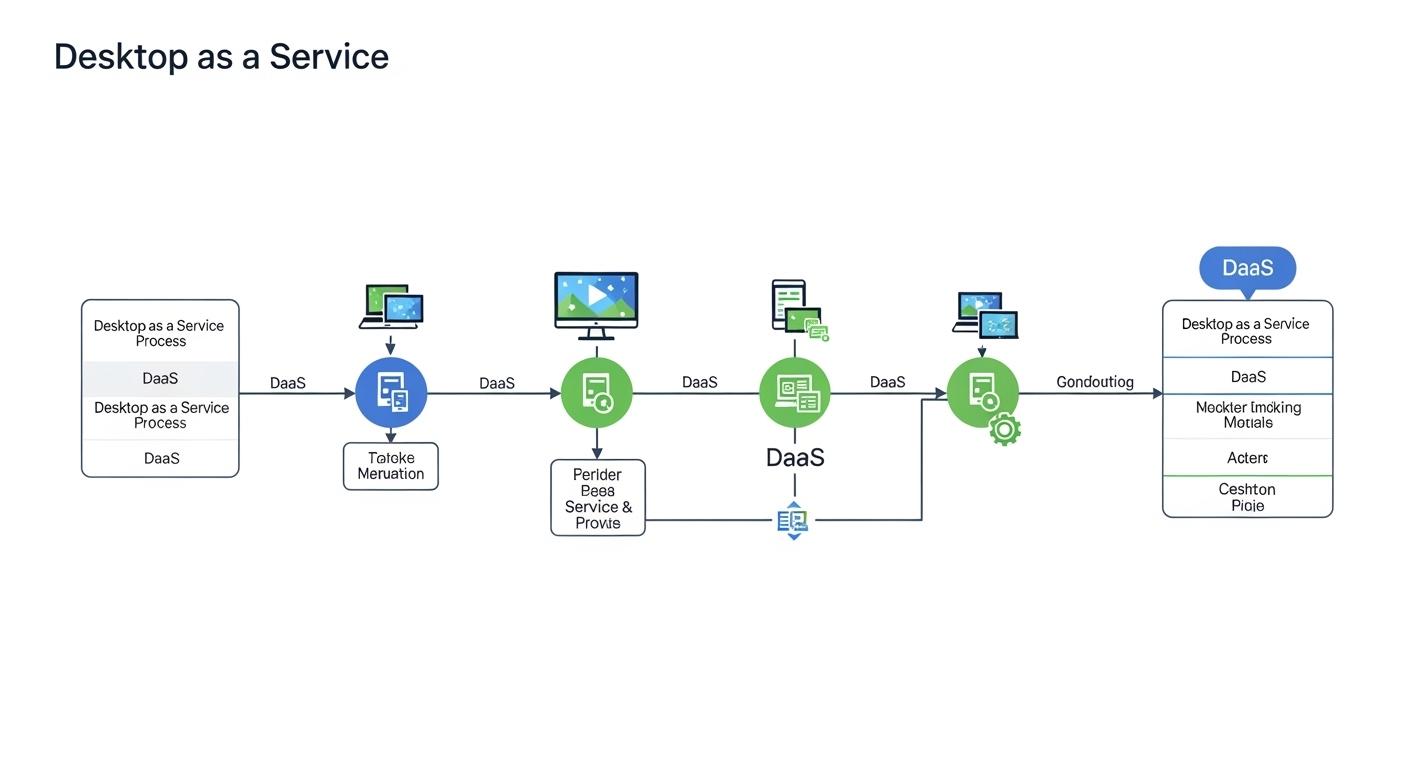

For organizations looking to reduce infrastructure complexity, Apporto offers a different approach from traditional virtualization platforms. Instead of requiring extensive on-premises infrastructure, client software, or complicated deployment processes, Apporto delivers virtual desktops directly through a web browser.

The platform is designed around simplicity, accessibility, and operational efficiency. Users can securely access applications and desktops from virtually any device without installing additional software. This browser-native approach helps reduce operational overhead while giving IT teams greater control over access, security, and resource utilization.

Unlike many VMware alternatives that focus primarily on managing virtual machines and underlying infrastructure, Apporto focuses on delivering a streamlined virtual desktop experience through cloud services and hybrid deployment options. For organizations seeking flexibility without adding management complexity, that distinction matters.

Key Features

Apporto is built to simplify virtual desktop delivery while maintaining enterprise-grade security and performance.

Browser-Native Access: Users can launch virtual desktops directly from a browser with no client installation required.

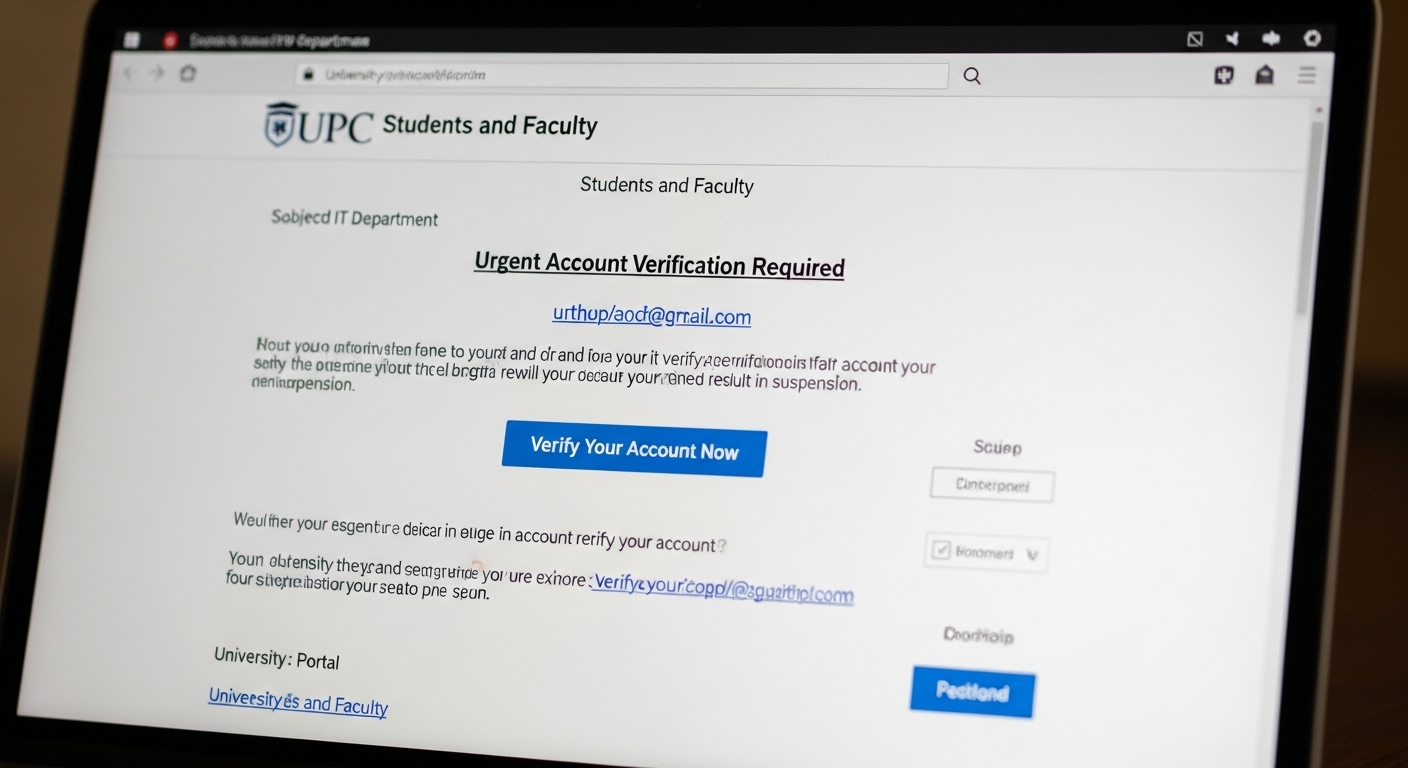

Zero Trust Security: Every user session is authenticated and secured, helping protect access across any location or device.

Rapid Deployment: Organizations can deploy virtual desktops significantly faster than many traditional VDI environments.

Centralized Management: Administrators can manage users, applications, and virtual resources through a unified interface.

Best For

Apporto is best suited for higher education institutions, midsize businesses, and distributed organizations that want secure virtual desktop access without the complexity of traditional VDI infrastructure. It is particularly valuable for environments supporting remote users, BYOD programs, or hybrid work initiatives.

Limitations

Organizations seeking deep hypervisor-level control, extensive virtualization customization, or infrastructure-focused virtualization management may find Apporto less flexible than platforms built specifically for managing virtual machines and data center environments.

Pricing

Apporto offers custom pricing based on deployment requirements, user counts, and infrastructure needs. Organizations must contact Apporto directly for a tailored quote.

2. Proxmox VE – Best Open-Source VMware Alternative for Infrastructure Flexibility

Overview

For organizations that want maximum control over their virtualization environment without paying enterprise licensing fees, Proxmox VE has become one of the most popular VMware alternatives available today. Built on open-source technologies, Proxmox combines virtualization, container management, networking, and storage into a single platform.

Its popularity has grown rapidly in recent years, particularly among organizations affected by VMware’s licensing changes and rising subscription costs. Many IT teams view Proxmox as a practical VMware replacement because it delivers core virtualization capabilities without requiring multiple products or complicated licensing agreements.

What makes Proxmox particularly compelling is its ability to manage both virtual machines and containers from the same platform. That flexibility allows organizations to support a wide range of workloads while keeping infrastructure management relatively straightforward.

Key Features

Proxmox brings together several infrastructure components that often require separate tools in other environments.

KVM Virtualization: Uses the proven open-source KVM hypervisor to run enterprise-grade virtual machines.

LXC Containers: Provides lightweight container support alongside traditional virtualization workloads.

Integrated Ceph Storage: Offers distributed data storage capabilities for scalability and high availability.

Web-Based Management Interface: Delivers centralized administration through an intuitive web-based management interface.

Best For

Proxmox VE is best for organizations seeking cost-effective virtualization software, service providers managing diverse workloads, and IT teams that value open technologies and infrastructure flexibility. It is particularly attractive to organizations looking to reduce licensing expenses while maintaining control over their environment.

Limitations

Although Proxmox offers impressive capabilities, some enterprise features may require additional configuration compared to commercial platforms. Organizations with limited Linux expertise may also face a steeper learning curve during deployment and ongoing management.

Pricing

The platform is free to use under its open-source licensing model. Paid support subscriptions are available for organizations that require enterprise support, updates, security patches, and access to the stable enterprise repository. Integrated backup and disaster recovery capabilities may also require additional infrastructure planning depending on deployment requirements.

3. Microsoft Hyper-V – Best VMware Alternative for Windows-Centric Organizations

Overview

If your infrastructure already runs heavily on Microsoft technologies, Microsoft Hyper-V is often one of the first VMware alternatives worth considering. As Microsoft’s native virtualization platform, Hyper-V is tightly integrated with Windows Server and the broader Microsoft ecosystem, making it a familiar option for many IT teams.

Unlike some VMware replacements that require adopting entirely new management models, Hyper-V allows organizations to leverage existing Microsoft investments while continuing to run virtualized workloads efficiently. The platform supports a wide range of guest operating systems, including Windows and Linux distributions, giving organizations flexibility as their infrastructure evolves.

One of Hyper-V’s biggest advantages is cost. Because it is included with Windows Server, many organizations can deploy virtualization capabilities without purchasing a separate hypervisor license. That can make a significant difference for businesses looking to control infrastructure spending while maintaining enterprise-grade functionality.

Key Features

Hyper-V combines virtualization capabilities with native Microsoft management and security tools.

Included with Windows Server: No separate hypervisor licensing is required for organizations already using Windows Server.

Live Migration: Move running virtual machines between hosts with minimal interruption to users and applications.

Windows Admin Center Integration: Simplifies management through a centralized interface for monitoring and administration.

Shielded VMs: Protect sensitive workloads using advanced security controls and encryption technologies.

Best For

Microsoft Hyper-V is best suited for organizations already invested in the Microsoft ecosystem, particularly those running Windows Server, Active Directory, SQL Server, and other Microsoft business applications.

Limitations

While Hyper-V offers strong virtualization capabilities, its ecosystem is not as extensive as VMware’s in some enterprise scenarios. Organizations with highly complex multi-cloud environments may also find fewer third-party integrations compared to certain alternative platforms.

Pricing

Microsoft Hyper-V is included with Windows Server, making it one of the more cost-effective virtualization options for existing Microsoft customers. Additional costs may arise from management tools, support services, backup solutions, or Windows Server licensing requirements.

4. Azure Stack HCI – Best VMware Alternative for Hybrid Cloud Infrastructure

Overview

For organizations that want to combine on-premises infrastructure with cloud capabilities, Azure Stack HCI (which Microsoft rebranded as Azure Local in late 2024) offers a compelling VMware alternative. Developed by Microsoft, the platform is designed to bridge traditional data center operations with Azure cloud services, creating a more unified operating model for modern IT environments.

Azure Stack HCI focuses on hybrid infrastructure rather than standalone virtualization. It allows organizations to run workloads locally while taking advantage of cloud-based management, monitoring, security, and backup capabilities. This approach appeals to businesses that must maintain certain workloads on-premises while still benefiting from the flexibility offered by cloud providers.

As more organizations adopt hybrid strategies, Azure Stack HCI has become an increasingly attractive VMware replacement for companies already invested in Microsoft’s ecosystem. The platform also helps reduce the complexity often associated with managing separate cloud and on-premises environments.

Key Features

Azure Stack HCI combines virtualization, storage, and cloud connectivity into a single platform.

Azure Integration: Connects directly with Azure cloud services for monitoring, security, governance, and management.

Unified Control Plane: Provides centralized administration across both local infrastructure and cloud resources.

Hybrid Deployment: Supports workloads running across on-premises infrastructure and public cloud environments.

Disaster Recovery Tools: Includes built-in capabilities for backup, business continuity, and disaster recovery planning.

Best For

Azure Stack HCI is best suited for organizations pursuing hybrid cloud strategies, businesses already using Microsoft technologies, and IT teams looking to modernize infrastructure without fully migrating to cloud IaaS platforms.

Limitations

The platform delivers its greatest value when paired with Azure services. Organizations using multiple cloud providers or seeking a completely cloud-agnostic solution may find the Microsoft-centric approach somewhat restrictive. Subscription-based costs can also increase as additional Azure services are adopted.

Pricing

Azure Stack HCI uses a subscription-based pricing model tied to physical CPU core usage. Additional costs may apply for Azure services, storage, backup, networking, and security features depending on the deployment architecture and operational requirements.

5. Nutanix – Best Hyperconverged VMware Alternative for Simplified Infrastructure Management

Overview

Nutanix has established itself as one of the strongest enterprise VMware alternatives, particularly for organizations looking to simplify infrastructure management. Rather than treating compute, storage, and networking as separate systems that require multiple tools and layers of administration, Nutanix brings everything together into a unified hyperconverged infrastructure platform.

At the center of this approach is Nutanix AHV, the company’s built-in hypervisor. By eliminating the need for separate virtualization licensing, AHV helps organizations reduce costs while simplifying day-to-day operations. This integrated model has made Nutanix a popular choice for enterprises that want to reduce complexity without sacrificing performance or scalability.

Migration is another area where Nutanix has invested heavily. As many organizations evaluate alternatives following VMware licensing changes, tools like Nutanix Move have become valuable assets for planning and executing large-scale migration projects with minimal disruption.

Key Features

Nutanix focuses on consolidating infrastructure components into a simpler operational model.

Nutanix AHV: A built-in hypervisor that eliminates the need for separate virtualization software licenses.

Hyperconverged Infrastructure: Combines compute, storage, and networking into a single platform for easier management.

Nutanix Move: Provides automated migration assistance for organizations transitioning workloads from VMware environments.

Single Control Plane: Centralizes infrastructure administration, monitoring, and lifecycle management through a unified interface.

Best For

Nutanix is best suited for enterprises, midsize businesses, and IT teams seeking to reduce infrastructure complexity while supporting critical workloads across private, hybrid, and multi-site environments.

Limitations

While Nutanix simplifies management significantly, the platform often requires a larger upfront investment than open-source alternatives. Smaller organizations with limited budgets may find the cost difficult to justify if they don’t need the full breadth of enterprise capabilities.

Pricing

Nutanix uses custom pricing based on infrastructure size, hardware requirements, support levels, and software subscriptions. Organizations must work directly with Nutanix or a partner to receive a tailored quote based on their deployment needs.

6. Red Hat OpenShift Virtualization – Best VMware Alternative for Managing VMs and Containers Together

Overview

As application development continues to evolve, many organizations are finding themselves managing two worlds at once: traditional virtual machines and modern container workloads. Running separate platforms for each can increase complexity, create operational silos, and introduce unnecessary costs. Red Hat OpenShift Virtualization aims to solve that challenge.

Built directly into the OpenShift platform, OpenShift Virtualization allows organizations to run virtual machines alongside containers within the same environment. Instead of maintaining separate infrastructure stacks, IT teams can manage both workload types through a unified platform. That approach has made OpenShift an increasingly attractive VMware alternative for organizations modernizing their infrastructure without abandoning existing applications.

Another advantage is flexibility. Because OpenShift is built around open technologies and Kubernetes, organizations gain greater portability and reduce the risk of becoming locked into a single vendor’s ecosystem. For teams planning long-term infrastructure investments, that flexibility can be just as important as performance.

Key Features

OpenShift Virtualization is designed to bridge traditional virtualization with modern cloud-native infrastructure.

OpenShift Virtualization: Run virtual machines and container workloads side by side on the same platform.

Managed Kubernetes: Provides native Kubernetes capabilities for application orchestration and management.

Open Ecosystem: Uses open technologies that help organizations avoid vendor lock-in and improve workload portability.

REST API Support: Enables automation, integrations, and infrastructure management through a robust REST API.

Best For

Red Hat OpenShift Virtualization is best suited for enterprises pursuing modernization initiatives, development teams managing both containers and virtual machines, and organizations seeking alternative platforms that support hybrid infrastructure strategies.

Limitations

The platform can be more complex to deploy and manage than traditional virtualization solutions. Organizations without Kubernetes expertise may face a learning curve during implementation and ongoing administration.

Pricing

OpenShift Virtualization is licensed through Red Hat subscriptions, with pricing based on deployment size, support requirements, and infrastructure usage. Organizations typically work directly with Red Hat or certified partners to receive customized pricing estimates.

7. XCP-ng – Best VMware Alternative for Organizations Prioritizing Open Technologies

Overview

For organizations seeking an open-source VMware replacement without sacrificing enterprise functionality, XCP-ng has emerged as a compelling option. Built on the Xen Project hypervisor, XCP-ng provides a mature virtualization platform designed to support production workloads while avoiding many of the licensing concerns associated with commercial virtualization vendors.

Interest in XCP-ng has grown as more organizations explore alternatives to VMware. The platform combines a proven virtualization foundation with an active community and commercial support options, giving IT teams flexibility in how they deploy and manage infrastructure. Unlike some open-source projects that require extensive customization, XCP-ng delivers many core virtualization capabilities out of the box.

Another advantage is familiarity. Organizations migrating from VMware environments often find that XCP-ng provides many of the same key capabilities needed to manage virtualized workloads, including live migration, centralized administration, and workload protection. That makes the transition process less intimidating, particularly for teams looking to reduce costs without completely changing their operational model.

Key Features

XCP-ng focuses on delivering enterprise-grade virtualization through open technologies.

Xen-Based Hypervisor: Built on the proven Xen virtualization architecture used in enterprise and cloud environments worldwide.

Open Source Platform: Community-driven development provides transparency, flexibility, and freedom from proprietary licensing models.

Integrated Management Tools: Simplifies infrastructure administration through centralized management capabilities.

Migration Support: Provides tools and guidance to assist organizations transitioning workloads from VMware environments.

Best For

XCP-ng is best suited for organizations that prioritize open technologies, IT teams seeking lower licensing costs, and businesses looking for alternative platforms that maintain enterprise virtualization functionality without vendor lock-in.

Limitations

While XCP-ng continues to mature, its ecosystem remains smaller than some commercial competitors. Organizations heavily dependent on specialized third-party integrations may need additional planning before migrating critical workloads.

Pricing

The XCP-ng platform is free to use under its open-source licensing model. Commercial support, advanced management tools, training, and enterprise services are available through paid subscriptions for organizations requiring additional assistance and long-term support.

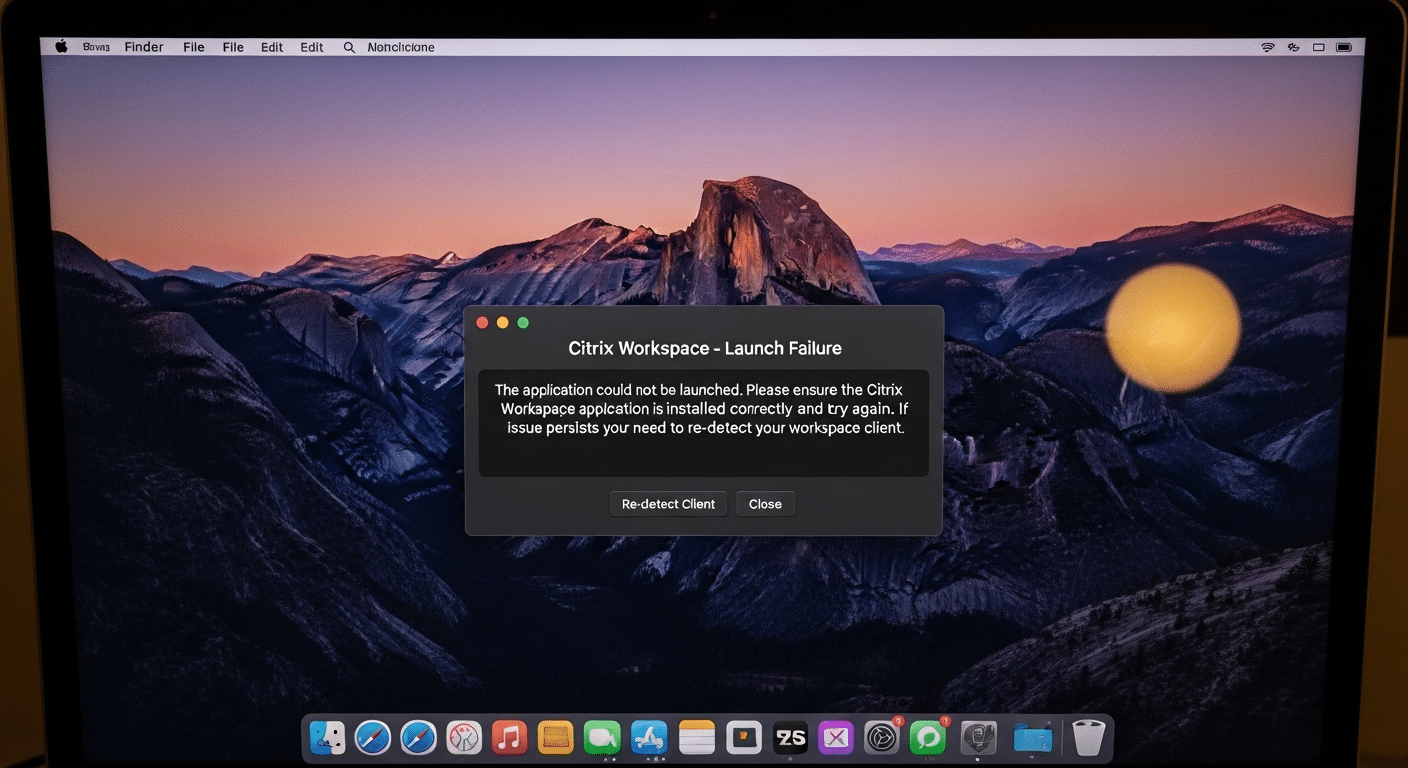

8. Citrix Hypervisor – Best VMware Alternative for Existing Citrix Environments

Overview

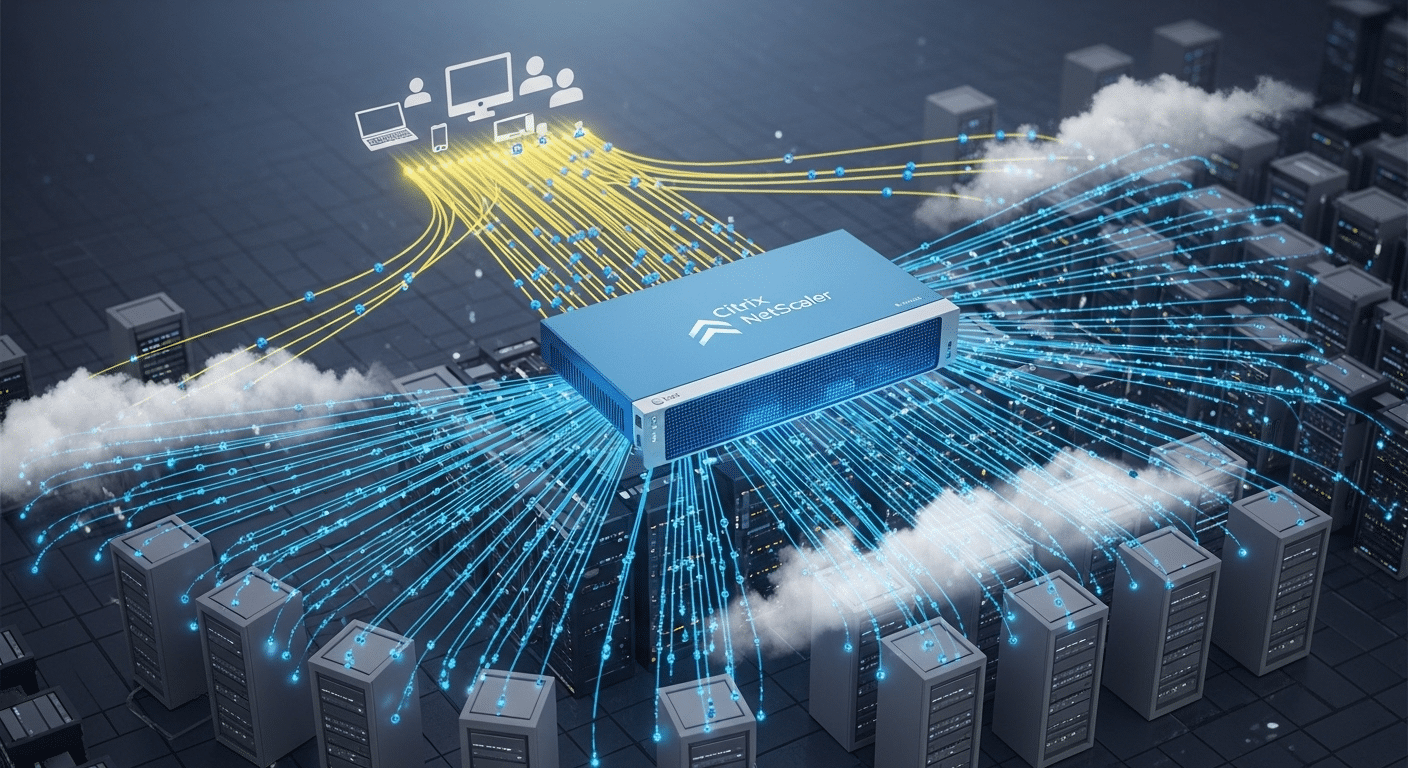

Organizations already invested in the Citrix ecosystem often look for a virtualization platform that integrates naturally with their existing infrastructure. Citrix Hypervisor, originally released as XenServer and marketed under the XenServer name again in its current releases, was built with that goal in mind. It provides a virtualization foundation designed to work closely with Citrix Virtual Apps and Desktops, making it a logical VMware alternative for organizations already using Citrix technologies.

The platform is based on the Xen hypervisor, a technology that has been widely used across enterprise and cloud environments for years. Citrix Hypervisor supports a broad range of workloads and offers many of the virtualization features organizations expect from enterprise-grade platforms, including live migration, workload balancing, and centralized management.

For businesses focused on delivering virtual desktops and applications, Citrix Hypervisor can help simplify infrastructure by keeping virtualization and end-user computing technologies within a familiar ecosystem. That tighter integration often reduces compatibility concerns and streamlines administration.

Key Features

Citrix Hypervisor focuses on enterprise virtualization and virtual desktop delivery.

Citrix Ecosystem Integration: Works closely with Citrix Virtual Apps and Desktops to simplify virtual desktop and application deployments.

High Availability: Helps maintain uptime and supports business continuity during infrastructure failures.

Resource Optimization: Improves resource utilization across hosts to help optimize performance and maximize infrastructure efficiency.

Enterprise Management Tools: Provides extensive administrative controls for managing virtual machines, storage, networking, and security policies.

Best For

Citrix Hypervisor is best suited for organizations already running Citrix Virtual Apps and Desktops, enterprises managing large virtual desktop deployments, and IT teams seeking deeper integration across their Citrix infrastructure.

Limitations

Organizations that are not already invested in the Citrix ecosystem may find fewer advantages compared to other VMware alternatives. Licensing structures can also be complex, particularly when combined with broader Citrix product suites.

Pricing

Citrix Hypervisor pricing varies depending on deployment size, feature requirements, support levels, and licensing agreements. Organizations typically need to work directly with Citrix or an authorized partner to receive a customized quote.

9. Oracle VM / Oracle Linux Virtualization Manager (OLVM) – Best VMware Alternative for Oracle Workloads

Overview

Not every organization needs a general-purpose VMware replacement. Some are primarily concerned with running Oracle databases, middleware, and business applications as efficiently as possible. In those environments, Oracle VM and Oracle Linux Virtualization Manager (OLVM) offer a purpose-built alternative designed to support Oracle-centric infrastructure.

OLVM is Oracle’s current virtualization platform and has become the strategic successor to Oracle VM for many deployments. Built on open-source technologies such as KVM, it provides enterprise virtualization capabilities while maintaining tight integration with Oracle’s software ecosystem. This alignment can simplify support, licensing discussions, and performance optimization for organizations that rely heavily on Oracle products.

For businesses running large Oracle environments, using a virtualization platform designed specifically for Oracle workloads can eliminate compatibility concerns and streamline administration. While it may not be the right fit for every organization, it remains a strong contender for enterprises seeking a target platform optimized for Oracle applications.

Key Features

Oracle’s virtualization offerings focus on performance, security, and integration across Oracle environments.

Oracle Optimization: Designed specifically for Oracle databases, enterprise applications, and business-critical workloads.

Enterprise Virtualization: Supports large-scale deployments with advanced features for performance, scalability, and workload management.

Integrated Management: Provides centralized administration for virtual machines, infrastructure resources, and operational policies.

Secure Boot Support: Enhances workload protection by verifying trusted software during the boot process and strengthening data protection measures.

Best For

Oracle VM and OLVM are best suited for enterprises running Oracle databases, Oracle Cloud integrations, ERP systems, and other Oracle applications that benefit from tight platform integration and vendor-aligned support.

Limitations

Organizations with highly diverse environments may find Oracle’s ecosystem-centric approach less flexible than broader virtualization platforms. Teams without significant Oracle investments may also struggle to justify adopting a platform optimized for a specific vendor stack.

Pricing

Oracle uses custom pricing models that vary based on support requirements, infrastructure size, software subscriptions, and deployment architecture. Organizations should work directly with Oracle to obtain accurate pricing and licensing information for their specific environment.

How Do You Choose the Right VMware Alternative for Your Environment?

Finding a VMware replacement is about more than comparing feature lists. The platform that works well for a global enterprise may be completely wrong for a midsize business with a small IT team. Likewise, the cheapest option isn’t always the most cost-effective once management overhead, support requirements, and future growth are factored in.

As you evaluate alternatives, focus on the areas that will have the biggest impact on your infrastructure over the next several years.

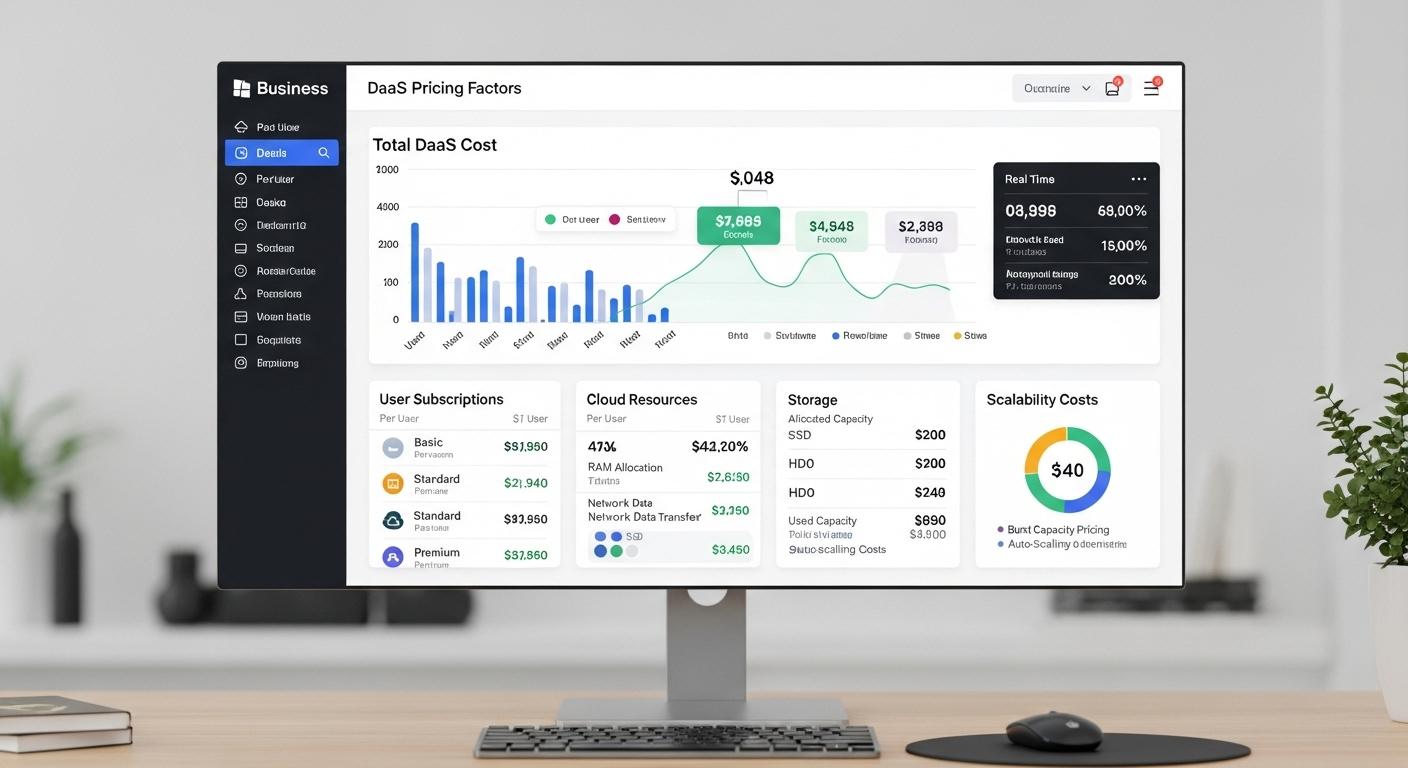

Licensing and Total Cost of Ownership

Licensing has become one of the primary reasons organizations are exploring alternatives to VMware. Following recent licensing changes, many businesses are taking a closer look at long-term costs rather than focusing solely on upfront expenses.

A platform with a lower purchase price may still introduce additional operational expenses through support contracts, third-party tools, or infrastructure requirements. Over time, those costs add up.

When evaluating alternatives, pay close attention to the following:

Consider Licensing Structure: Subscription-based licensing can provide flexibility, but it may also increase long-term operational expenses as workloads grow.

Evaluate Hidden Costs: Some platforms require additional products for backup, monitoring, disaster recovery, or advanced management features.

Review Scaling Costs: Understand how pricing changes as you add virtual machines, storage, or compute resources.

Analyze Support Requirements: Enterprise support contracts can significantly impact total ownership costs over time.

The most affordable platform on day one isn’t always the most economical five years later.
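To make the day-one versus five-year comparison concrete, here is a minimal Python sketch of a multi-year cost model. Every figure, field name, and the two sample platforms are hypothetical placeholders chosen for illustration, not vendor pricing; the model also assumes that only the subscription line grows as workloads scale, which will not hold for every contract.

```python
from dataclasses import dataclass


@dataclass
class PlatformCosts:
    """Hypothetical cost inputs for one candidate platform (all values illustrative)."""
    name: str
    upfront: float              # one-time spend: hardware, migration services, perpetual licenses
    annual_subscription: float  # year-one subscription or license fee
    annual_support: float       # enterprise support contract per year
    annual_addons: float        # backup, monitoring, DR tooling bought separately per year
    growth_rate: float          # assumed yearly growth in subscription cost as workloads scale


def total_cost_of_ownership(p: PlatformCosts, years: int) -> float:
    """Sum upfront and recurring costs over the given number of years."""
    total = p.upfront
    subscription = p.annual_subscription
    for _ in range(years):
        total += subscription + p.annual_support + p.annual_addons
        subscription *= 1 + p.growth_rate  # subscription scales with the environment
    return total


# Two made-up profiles: one cheap to start, one with a larger initial investment.
subscription_first = PlatformCosts("subscription-first", 0, 10_000, 0, 5_000, 0.10)
upfront_heavy = PlatformCosts("upfront-heavy", 40_000, 0, 8_000, 0, 0.0)

for horizon in (1, 5):
    for platform in (subscription_first, upfront_heavy):
        cost = total_cost_of_ownership(platform, horizon)
        print(f"{platform.name:>20} over {horizon} yr: {cost:,.0f}")
```

With these sample inputs the subscription-first profile costs less in year one but more over five years, which is the pattern the checklist above is meant to expose. Swapping in quoted figures from each vendor turns the same function into a quick sanity check.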

Infrastructure Complexity and IT Resources

Your internal IT resources should play a major role in the decision-making process. Some virtualization platforms offer extensive customization and advanced capabilities, but they also require more expertise to deploy and maintain.

Organizations with smaller IT teams often benefit from solutions that reduce administrative burden and simplify day-to-day operations.

Consider the following:

Small IT Teams: Integrated platforms such as Apporto or Nutanix can reduce complexity by consolidating management tools and simplifying infrastructure administration.

Large Enterprises: Organizations with dedicated infrastructure teams may benefit from platforms offering deeper customization and granular control.

Operational Model: Evaluate how much ongoing management each platform requires after deployment.

Automation Capabilities: Platforms that automate routine tasks can reduce manual effort and improve operational efficiency.

A solution that looks powerful on paper can become difficult to manage if it exceeds the capabilities of your team.

Cloud, Hybrid, or On-Premises Requirements

Infrastructure strategy matters. Some organizations are moving aggressively toward the cloud, while others must maintain workloads in private data centers for compliance, performance, or operational reasons.

The right VMware alternative should align with your long-term infrastructure goals rather than forcing you into an unwanted deployment model.

Key considerations include:

Cloud-First Organizations: Azure Stack HCI and Apporto offer strong options for businesses embracing cloud services and hybrid infrastructure.

Infrastructure-Heavy Environments: Nutanix and Proxmox provide greater flexibility for organizations maintaining significant on-premises resources.

Hybrid Deployments: Look for platforms that support seamless movement between on-premises infrastructure and cloud environments.

Future Growth: Select a solution that can scale computing resources, storage, and workloads without requiring a complete infrastructure redesign.

The best platform is often the one that supports where your business is heading, not where it happens to be today.

Migration Timeline and Risk Tolerance

One reality many organizations underestimate is the migration process itself. Replacing VMware rarely happens overnight. Depending on environment size and complexity, migrations can take anywhere from 18 to 48 months.

That makes planning just as important as platform selection.

Before making a decision, evaluate:

Migration Planning: Assess workload dependencies, application compatibility, infrastructure requirements, and staff training needs.

Minimal Downtime Requirements: Mission-critical applications may require phased migration approaches and extensive testing.

Live Migration Capabilities: Platforms supporting live migration can move running virtual machines between hosts, which helps reduce business disruption during and after the transition.

Disaster Recovery Readiness: Strong backup, recovery, and business continuity features can significantly lower migration risk.

A successful migration isn’t simply about moving workloads. It’s about maintaining stability, protecting users, and ensuring the new platform can support the organization for years to come.
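One way to turn the dependency assessment above into a phased plan is to group workloads into migration waves, so that nothing moves before the services it relies on. The sketch below is only an illustration: the workload names and their dependencies are hypothetical, and it assumes dependencies should migrate first, which may not suit every environment. It uses Python's standard-library graphlib module (Python 3.9 or later).

```python
from graphlib import TopologicalSorter

# Hypothetical inventory: each workload maps to the workloads it depends on.
dependencies = {
    "database": set(),
    "auth-service": {"database"},
    "web-frontend": {"auth-service", "database"},
    "reporting": {"database"},
}


def plan_migration_waves(deps):
    """Group workloads into waves; each wave depends only on earlier waves."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        # Everything returned here has all of its dependencies already placed.
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = plan_migration_waves(dependencies)
for number, wave in enumerate(waves, start=1):
    print(f"Wave {number}: {', '.join(wave)}")
```

Workloads that land in the same wave have no dependencies on each other, so they are candidates for migrating and testing together. A circular dependency raises an error rather than producing a plan, which is a useful signal that those workloads may need to move as a single unit.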

What Are the Biggest Reasons Organizations Are Replacing VMware?

For many years, VMware was considered the default choice for enterprise virtualization. That reputation was built on a mature platform, broad ecosystem support, and extensive virtualization capabilities. Yet today, a growing number of organizations are actively evaluating alternatives.

The reasons vary from one business to another, but several common themes continue to emerge. Cost is certainly part of the conversation, but it isn’t the only factor. Many organizations are also reassessing complexity, flexibility, and long-term infrastructure strategy.

Here are some of the biggest drivers behind the growing interest in VMware alternatives.

Licensing Cost Increases: Following the Broadcom acquisition, many organizations have reported substantial increases in licensing and subscription costs compared to their previous agreements, prompting a reevaluation of their virtualization strategy.

Licensing Complexity: VMware environments often require multiple products, bundles, and tiered subscriptions to unlock desired functionality. This complexity can make budgeting more difficult and increase administrative burden for IT teams.

Operational Overhead: Managing VMware environments frequently involves additional tools for monitoring, backup, disaster recovery, security, and storage management. These extra components can increase operational overhead and require more time from already stretched IT teams.

Hidden Costs: Beyond licensing, organizations often encounter expenses related to support contracts, infrastructure upgrades, third-party integrations, and specialized expertise needed to maintain VMware deployments.

Desire for Open Technologies: Many organizations are exploring platforms built on open technologies such as KVM, Xen, and Kubernetes. These solutions can provide greater workload portability, reduce vendor dependency, and offer more flexibility for future infrastructure decisions.

As organizations look to replace VMware, the conversation is increasingly shifting toward simplicity, predictable operational expenses, and platforms that can adapt to changing business requirements without adding unnecessary complexity.

Final Thoughts

The best VMware alternative ultimately depends on your infrastructure goals and operational priorities. If you’re looking for browser-based virtual desktop delivery with minimal management overhead, Apporto stands out as the strongest option. For organizations prioritizing open-source flexibility and cost control, Proxmox VE remains one of the most compelling choices available today.

Microsoft Hyper-V is a natural fit for businesses deeply invested in the Microsoft ecosystem, while Azure Stack HCI delivers strong hybrid cloud capabilities for organizations balancing on-premises and cloud resources. If infrastructure simplification is the primary objective, Nutanix continues to lead the hyperconverged infrastructure category with its integrated management model and built-in AHV hypervisor.

Before making a decision, take a close look at your operational model, future growth plans, internal IT resources, and migration requirements. A platform that works well for one organization may introduce unnecessary complexity for another. The most successful VMware replacement projects are built on careful planning, realistic timelines, and a clear understanding of long-term infrastructure needs.

If you’re exploring a simpler way to deliver secure virtual desktops without the complexity of traditional VDI infrastructure, it’s worth taking a closer look at Apporto.

Frequently Asked Questions (FAQs)

1. What is the best VMware alternative in 2026?

There is no single answer for every organization. Apporto is a strong choice for browser-based virtual desktops, Proxmox VE excels in open-source virtualization, Hyper-V fits Microsoft environments well, and Nutanix remains a leading hyperconverged infrastructure platform.

2. Why are organizations replacing VMware after the Broadcom acquisition?

Many organizations cite rising licensing costs, subscription-based pricing changes, and increased operational expenses as key reasons. Others are seeking simpler infrastructure management, more predictable pricing, and greater flexibility through open technologies and alternative virtualization platforms.

3. Is Proxmox VE a good replacement for VMware vSphere?

Yes, Proxmox VE is considered one of the most popular VMware alternatives. It combines KVM virtualization, LXC containers, integrated storage capabilities, and a web-based management interface while eliminating many licensing costs associated with VMware vSphere.

4. What VMware alternative works best with Windows Server?

Microsoft Hyper-V is typically the best choice for Windows Server environments. It integrates closely with Active Directory, Windows Admin Center, and other Microsoft technologies, allowing organizations to extend existing investments while simplifying infrastructure management.

5. Can VMware workloads be migrated without downtime?

In some cases, yes. Many platforms support live migration and workload mobility features that help minimize downtime. However, the outcome depends on workload complexity, infrastructure design, application dependencies, and the overall migration strategy.

6. Are open-source VMware alternatives enterprise-ready?

Absolutely. Platforms such as Proxmox VE and XCP-ng are widely used in production environments. Open-source hypervisors have matured significantly and can support enterprise-grade virtualization, disaster recovery, high availability, and large-scale virtualized workloads.